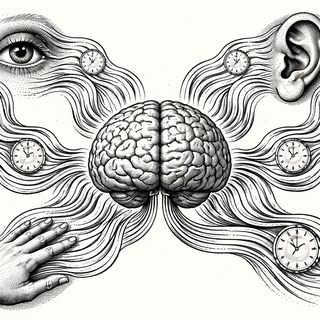

Your brain handles five completely different senses. Vision works with photons hitting your retina. Hearing decodes pressure waves in the air. Touch registers mechanical forces on your skin. Smell and taste involve molecular detection. For decades, researchers largely studied each of these modalities separately, documenting the specialized circuits and processing tricks each one uses.

But what if they're all built on the same foundation? A review in the Annual Review of Psychology proposes that time, the patterns and sequences of when things happen, might be the unifying principle that organizes sensory experience across all modalities. Different instruments, same sheet music.

The Temporal Scaffolding Idea

Here's the core claim: detecting and exploiting temporal patterns may be the fundamental mechanism your brain uses to make sense of sensory input, regardless of which sense is delivering that input.

This sounds abstract, so let's make it concrete. When you watch someone walking, you're not seeing a random collection of shapes. You're seeing a sequence that unfolds over time in a predictable way. Leg forward, other leg forward, arm swing, repeat. The temporal structure tells you "that's walking."

When you listen to someone speak, the meaning comes from the sequence of sounds over time. Switch the order around and you get nonsense. The temporal pattern is the message.

When you feel an object in your hand, you don't get all the tactile information at once. You run your fingers over it, and the sequence of sensations over time tells you about the shape and texture. Again, temporal patterns.

What the review argues is that this isn't just coincidence. Time might be the actual scaffolding that the brain uses to build organized perceptions across all sensory channels.

The Impossible Problem That Becomes Possible

There's a computational reason to take this idea seriously. Interpreting sensory input is, from a mathematical standpoint, ridiculously hard. The patterns of light hitting your eyes are compatible with infinitely many possible scenes. The sound waves reaching your ears could be produced by countless different sources. Yet your brain usually settles on the right interpretation almost instantly.

How? One answer is that the brain exploits regularities in the natural world to narrow down the possibilities. And many of those regularities are temporal. Things that go together tend to happen at similar times. Causes precede effects. Natural events have characteristic rhythms and sequences.

If your brain is tuned to detect these temporal patterns, it can use them as shortcuts for figuring out what's actually happening. The impossible interpretation problem becomes tractable because most logically possible interpretations would violate the temporal regularities that the real world actually follows.
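Here is a toy version of that pruning argument in Python. It is only an illustration, not a model from the review: the events, their timestamps, and the one-second MAX_LAG_S window are made up. Of all the ways to pair one event as the cause of another, only the pairings that respect "causes precede effects" within a plausible delay survive.

```python
from itertools import permutations

# Invented events: (label, time in seconds), listed in the order they occurred.
events = [("door_slam", 0.00), ("dog_bark", 0.35), ("lightning", 1.60), ("thunder", 2.10)]

MAX_LAG_S = 1.0  # assumed maximum delay between a cause and its effect

def plausible_links(events, max_lag=MAX_LAG_S):
    """Keep only (cause, effect) pairings where the cause comes first
    and the effect follows within max_lag seconds."""
    return [(c, e) for (c, tc), (e, te) in permutations(events, 2)
            if 0 < te - tc <= max_lag]

n_pairs = len(events) * (len(events) - 1)  # every ordered pairing of two events
links = plausible_links(events)
print(f"{n_pairs} candidate pairings, {len(links)} survive: {links}")
```

Even this crude rule cuts twelve candidate pairings down to two (door slam then dog bark, lightning then thunder). A real perceptual system would combine many such regularities, and a surviving pairing is still only a candidate, not a confirmed cause.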

This is where the review gets interesting from a theoretical perspective. The authors bring together insights from neurophysiology, cognitive neuroscience, and theoretical computer science to build a coherent picture of how temporal scaffolding might work.

Neurons Are Obsessed With Timing

At the neural level, there's plenty of evidence that timing matters enormously. Neurons aren't just counting spikes. They're paying attention to when those spikes arrive. The timing relationships between different neurons' activity carry information above and beyond the raw firing rates.

Many neural circuits seem designed to detect coincidences, things happening at the same time. Others specialize in detecting sequences, things happening in particular orders. The brain has extensive machinery for representing and processing temporal structure.
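The difference between those two kinds of detector is easy to show in a toy model. The Python sketch below is not a model of any real circuit: the spike times, the 2 ms coincidence window, and the 5 to 25 ms sequence lag are all invented. It shows that two neurons with identical spike counts can still carry different timing information, and that "at the same time" and "in this order" are different questions.

```python
# Two made-up spike trains (spike times in milliseconds). Each neuron fires
# four spikes, so their firing rates are identical; only the timing differs.
neuron_a = [10.0, 52.0, 90.0, 140.0]
neuron_b = [11.5, 70.0, 91.0, 200.0]

def coincidences(train_a, train_b, window_ms=2.0):
    """Coincidence detection: spike pairs from A and B within window_ms of each other."""
    return [(ta, tb) for ta in train_a for tb in train_b if abs(ta - tb) <= window_ms]

def sequences(first, second, min_lag_ms=5.0, max_lag_ms=25.0):
    """Sequence detection: a spike in `first` followed by a spike in `second`
    after a lag between min_lag_ms and max_lag_ms. The minimum lag excludes
    near-simultaneous pairs, which count as coincidences instead."""
    return [(t1, t2) for t1 in first for t2 in second
            if min_lag_ms <= t2 - t1 <= max_lag_ms]

print("A and B together:", coincidences(neuron_a, neuron_b))
print("A then B:", sequences(neuron_a, neuron_b))
```

A pure spike counter would treat these two neurons as equivalent; the coincidence and sequence detectors pick out different, non-overlapping structure in the same data.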

If time is the scaffolding for sensory organization, you'd expect exactly this kind of neural investment in temporal processing. And that's what we see.

Babies and Development

One of the interesting implications of this framework concerns development. How do babies learn to organize their perceptions? How does the blooming, buzzing confusion of infancy turn into the structured sensory experience of an adult?

If temporal scaffolding is fundamental, maybe the answer involves learning temporal regularities. Babies could be detecting what predicts what, what goes with what, which sequences are meaningful versus random. The statistical learning that infants are famous for might be, at its core, temporal pattern learning.
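A common way to formalize infant statistical learning is with transitional probabilities: how likely one syllable is to follow another. This is the statistic used in well-known infant word-segmentation studies, though the review's own formulation may differ. The sketch below is a toy: the two nonsense words, the syllable stream, and the 0.9 boundary threshold are invented. Within a word, each syllable reliably predicts the next; across word boundaries the prediction weakens, and that dip is enough to recover the words.

```python
from collections import Counter

def transitional_probabilities(stream):
    """Estimate P(next | current) for every adjacent pair in a sequence."""
    pair_counts = Counter(zip(stream, stream[1:]))
    first_counts = Counter(stream[:-1])  # the last item has no successor
    return {(a, b): n / first_counts[a] for (a, b), n in pair_counts.items()}

def segment(stream, tp, threshold=0.9):
    """Insert a boundary wherever the transitional probability dips below threshold."""
    segments, current = [], [stream[0]]
    for a, b in zip(stream, stream[1:]):
        if tp[(a, b)] < threshold:
            segments.append("".join(current))
            current = []
        current.append(b)
    segments.append("".join(current))
    return segments

# Made-up stream built from two invented words, tu-pi-ro and go-la-bu,
# run together with no pauses between them.
words = ["tupiro", "golabu", "tupiro", "tupiro", "golabu", "golabu", "tupiro"]
stream = [w[i:i + 2] for w in words for i in range(0, 6, 2)]

tp = transitional_probabilities(stream)
print(segment(stream, tp))
```

With only two words, the within-word probabilities come out at exactly 1.0 and the between-word ones at 2/3 or 1/3. In a richer stream the within-word values would merely be higher, which is why the sketch uses a threshold rather than an equality test.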

This gives developmental researchers a specific framework for understanding how perception gets organized. It's not just about learning to recognize objects or sounds. It's about learning the temporal structure of the sensory world.

When the Scaffolding Goes Wrong

On the clinical side, if temporal scaffolding is fundamental to sensory organization, then problems with temporal processing might manifest as broad sensory difficulties. Some sensory processing disorders might be, at root, disorders of temporal scaffolding.

This is speculative, but it suggests new angles for understanding and potentially treating conditions where sensory experience seems disorganized or overwhelming. If the underlying problem is in how the brain handles temporal patterns, interventions that target temporal processing specifically might be more effective than those that focus on individual sensory modalities.

Not Replacing the Old, Adding the New

The temporal scaffolding hypothesis doesn't throw out everything we know about modality-specific processing. Your visual cortex and auditory cortex are genuinely different. They use different types of neurons, different circuit architectures, different processing strategies for the specific challenges of their input.

But underneath that specialization, there might be shared computational logic. All the modalities might be using temporal structure as an organizing principle, even as they implement that principle in different ways given their different constraints.

Understanding this deeper unity could help us build better models of perception. Current artificial systems often handle each sensory modality separately, then try to integrate them later. But if human brains organize perception around time from the start, maybe AI systems should too.
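One simple way to "organize around time from the start" is to put every modality on a shared timeline before doing anything else and let temporal coincidence suggest which events belong together. This is a hypothetical sketch, not an architecture from the review or from any particular AI system: the event streams, their labels, and the 100 ms bin width are invented.

```python
import heapq
from collections import defaultdict

# Invented, already time-sorted event streams: (time_ms, modality, label).
vision = [(0, "vision", "lips_open"), (210, "vision", "hand_contact")]
audio = [(40, "audio", "syllable_ba"), (900, "audio", "door_creak")]
touch = [(230, "touch", "pressure_on_palm")]

def bind_by_time(*streams, window_ms=100):
    """Merge per-modality streams onto one timeline, group events that share
    a fixed time bin of width window_ms, and keep multimodal bins only."""
    bins = defaultdict(list)
    for t, modality, label in heapq.merge(*streams):  # merge by timestamp
        bins[t // window_ms].append((modality, label))
    return {b * window_ms: evs for b, evs in sorted(bins.items())
            if len({m for m, _ in evs}) > 1}

print(bind_by_time(vision, audio, touch))
```

Here the lip movement pairs with the syllable and the visible hand contact pairs with the felt pressure, while the lone door creak is left unbound. Fixed bins are the crudest choice: events at 95 ms and 105 ms land in different bins even though they are only 10 ms apart. A sliding window around each event would avoid that edge effect at the cost of a little more bookkeeping.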

Time Will Tell

The temporal scaffolding idea is a framework, not a proven theory. It's a way of organizing existing findings and generating new predictions. The question is whether it holds up as researchers put it to the test.

If it does, it would represent a significant shift in how we think about perception. Instead of treating vision, hearing, touch, smell, and taste as fundamentally separate systems that occasionally need to talk to each other, we'd see them as variations on a common temporal theme. Different instruments playing from the same sheet music, united by the rhythm of time.

And that would be a pretty elegant answer to the question of how one brain handles five completely different senses. The secret might have been ticking away the whole time.

Reference: Sinha P, et al. (2025). The Temporal Scaffolding of Sensory Organization. Annual Review of Psychology. doi: 10.1146/annurev-psych-032525-040352 | PMID: 41032576

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.