You know that friend who insists on reading movie spoilers but then only reads the first sentence? That's basically how neuroscience has been analyzing optogenetics experiments. Researchers have this jaw-dropping tool that lets them flip brain cells on and off with literal light beams, and then they run a t-test and call it a day. Wild, right?

A new paper from Gabriel Loewinger, Alexander Levis, and Francisco Pereira just called out this whole situation and offered a smarter way to actually listen to what the data is trying to tell us.

Wait, What's Optogenetics Again?

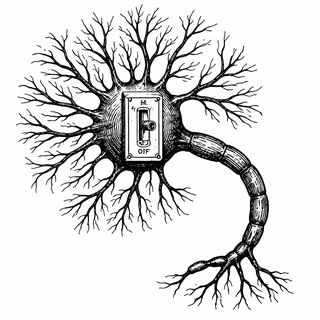

Quick refresher: optogenetics is the neuroscience equivalent of installing a light switch in individual brain cells. Scientists genetically engineer neurons to produce light-sensitive proteins (borrowed from algae, because nature is a beautiful weirdo), then shine light through thin optical fibers to turn those specific neurons on or off. The technique earned its developers the 2013 Brain Prize and the 2021 Lasker Award, and people keep whispering "Nobel" every October (Deisseroth, 2015).

There are two flavors of these experiments. Open-loop designs are like a playlist on a timer: light pulses fire on a preset schedule regardless of what the animal is doing. Closed-loop designs are more like a DJ reading the room: stimulation adapts in real time based on the animal's brain activity or behavior (Grosenick et al., 2015).

The Problem With "Just Run a T-Test"

Here's where things get spicy. The standard playbook for optogenetics analysis goes something like: compare average behavior in the "light on" group versus the "light off" group, slap a p-value on it, publish. It's the statistical equivalent of judging a movie by its poster.

This approach throws away a treasure trove of trial-by-trial information. Does the effect of stimulation build up over repeated pulses? Does it wear off after a few trials? Is there a dose-response relationship, or does the brain hit a ceiling and say "enough already"? A simple group comparison can't touch any of these questions. It's like having a conversation by only reading subject lines.
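To make that concrete, here is a minimal simulation sketch. Everything in it is made up for illustration: the group sizes, the noise level, and the exponentially fading `true_effect` are assumptions, not numbers from the paper. It compares the pooled "light on minus light off" difference (the quantity a t-test looks at) with the same difference computed trial by trial.

```python
import numpy as np

def simulate_sessions(n_animals=400, n_trials=20, seed=0):
    """Toy data: half the animals get light, and the effect fades over trials."""
    rng = np.random.default_rng(seed)
    light_on = np.arange(n_animals) % 2 == 0      # alternate animals between groups
    trial = np.arange(n_trials)
    true_effect = 2.0 * np.exp(-trial / 3.0)      # hypothetical effect that wears off
    noise = rng.normal(0.0, 0.5, size=(n_animals, n_trials))
    behavior = noise + light_on[:, None] * true_effect[None, :]
    return light_on, behavior, true_effect

light_on, behavior, true_effect = simulate_sessions()

# What a group comparison sees: one number, averaged over every trial.
pooled_diff = behavior[light_on].mean() - behavior[~light_on].mean()

# What the trial-level view sees: one difference per trial.
per_trial_diff = behavior[light_on].mean(axis=0) - behavior[~light_on].mean(axis=0)

print(f"pooled difference: {pooled_diff:.2f}")
print("per-trial differences:", np.round(per_trial_diff, 2))
```

In this toy setup the pooled number lands somewhere in the bland middle, while the per-trial view shows a large early effect that decays toward zero. Same data, very different story.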

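The problem gets thornier with closed-loop experiments, where what the animal did on trial 5 affects whether stimulation happens on trial 6, which in turn influences behavior on trial 7. Because stimulation is no longer independent of the animal's past behavior, a plain on-versus-off comparison mixes up the effect of the light with whatever triggered it. These tangled feedback loops make standard statistics about as useful as a chocolate teapot.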

Borrowing Brains From... Smartphone Apps?

Loewinger and colleagues found their solution in an unlikely place: mobile health apps. Researchers running micro-randomized trials of smartphone-based health interventions (think: "Should we send a motivational text right now?") had already grappled with a similar puzzle (Klasnja et al., 2015). They developed something called causal excursion effects, a way to measure what happens when you briefly "excurse" from a treatment protocol, rather than comparing two entirely different treatment histories (Boruvka et al., 2018).
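For readers who like symbols, the general shape of a one-step causal excursion effect can be sketched as follows. The notation is an assumption in the spirit of Boruvka et al. (2018), not copied from the new paper: $A_t$ marks whether stimulation happened on trial $t$, $\bar{A}_{t-1}$ is the stimulation history produced by the experiment's own protocol, $Y_{t+1}(\cdot)$ is the behavior that would be seen on the next trial, and $S_t$ is an optional summary of the history so far.

$$
\beta(t; s) = \mathbb{E}\left[\, Y_{t+1}(\bar{A}_{t-1}, 1) - Y_{t+1}(\bar{A}_{t-1}, 0) \;\middle|\; S_t = s \,\right]
$$

The history stays whatever the protocol would have produced. Only trial $t$ gets switched between "stimulate" and "don't", and that brief departure is the excursion.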

The team adapted this framework to optogenetics and built a whole taxonomy of questions you can now actually answer:

- Blip effects: What does a single zap do to behavior on the very next trial?

- Effect dissipation: Does the effect linger like a good coffee, or vanish like your motivation on a Monday?

- Dose-response: Do successive stimulations stack, or does the brain plateau?

- Additive vs. antagonistic: Do two stimulations play nicely together, or cancel each other out?
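The first two questions in that list can be illustrated with a toy open-loop simulation. This is only a sketch under loud assumptions: stimulation is a fair coin flip on every trial, the lingering effect sizes in `lag_weights` are invented, and the estimator is a plain difference in means rather than anything from the paper. With coin-flip stimulation, comparing behavior `lag` trials after a zap versus after no zap recovers how much of the effect is still hanging around.

```python
import numpy as np

def simulate_open_loop(n_animals=500, n_trials=60,
                       lag_weights=(1.0, 0.5, 0.25), seed=1):
    """Coin-flip stimulation; each pulse nudges behavior on the next few trials."""
    rng = np.random.default_rng(seed)
    stim = rng.integers(0, 2, size=(n_animals, n_trials))
    behavior = rng.normal(0.0, 1.0, size=(n_animals, n_trials))
    for lag, weight in enumerate(lag_weights, start=1):
        # A pulse on trial t adds `weight` to behavior on trial t + lag.
        behavior[:, lag:] += weight * stim[:, :-lag]
    return stim, behavior

def lagged_effect(stim, behavior, lag):
    """Mean behavior `lag` trials after a pulse minus `lag` trials after no pulse."""
    a = stim[:, :-lag].ravel()
    y = behavior[:, lag:].ravel()
    return y[a == 1].mean() - y[a == 0].mean()

stim, behavior = simulate_open_loop()
effects = {lag: lagged_effect(stim, behavior, lag) for lag in range(1, 5)}
for lag, est in effects.items():
    print(f"lag {lag}: estimated effect {est:.2f}")
```

Lag 1 plays the role of the blip effect, and the shrinking estimates at lags 2 through 4 trace the dissipation. This shortcut only works because stimulation here ignores the animal entirely. Closed-loop designs need the heavier machinery.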

They backed all of this up with serious statistical machinery: inverse-probability-weighted estimators, multiply robust estimators for when you're not sure your model is right (spoiler: it never is), and a scalable computational implementation so you don't need a supercomputer to run the analysis.
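To give a feel for the inverse-probability-weighting idea, here is a minimal sketch on a toy closed-loop experiment. It is not the authors' estimator or software. The stimulation rule (80% chance after a positive response, 20% otherwise), the `true_effect` of 1.0, and the function names are all hypothetical, and the weighting is a simple normalized (Hajek-style) version that assumes the stimulation probabilities are known.

```python
import numpy as np

def simulate_closed_loop(n_animals=1000, n_trials=50, true_effect=1.0, seed=2):
    """Toy closed loop: high behavior on one trial makes stimulation more likely."""
    rng = np.random.default_rng(seed)
    prev = rng.normal(0.0, 1.0, size=n_animals)
    rows = []
    for _ in range(n_trials):
        p_stim = np.where(prev > 0, 0.8, 0.2)          # known stimulation rule
        stim = rng.random(n_animals) < p_stim
        nxt = 0.5 * prev + true_effect * stim + rng.normal(0.0, 1.0, size=n_animals)
        rows.append((p_stim, stim, nxt))
        prev = nxt
    p, a, y = (np.concatenate(col) for col in zip(*rows))
    return p, a.astype(float), y

def naive_difference(a, y):
    """Plain 'light on' minus 'light off' comparison of next-trial behavior."""
    return y[a == 1].mean() - y[a == 0].mean()

def ipw_blip(p, a, y):
    """Normalized IPW: reweight each trial by 1 / P(the stimulation it received)."""
    w1 = a / p
    w0 = (1 - a) / (1 - p)
    return np.sum(w1 * y) / np.sum(w1) - np.sum(w0 * y) / np.sum(w0)

p, a, y = simulate_closed_loop()
naive = naive_difference(a, y)
ipw = ipw_blip(p, a, y)
print(f"true effect 1.00 | naive {naive:.2f} | IPW {ipw:.2f}")
```

In this toy world the naive comparison overshoots, because stimulated trials tend to follow trials where behavior was already high. Weighting by the known stimulation probabilities undoes that imbalance and pulls the estimate back toward the simulated truth.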

What They Actually Found

When the team applied their methods to data from an actual optogenetics experiment, effects that were completely invisible under standard analyses popped right out. The conventional approach had been staring at the data and seeing nothing; the new framework looked at the same data and found meaningful trial-level causal dynamics hiding in plain sight.

This matters because optogenetics is supposed to be neuroscience's gold standard for establishing cause and effect in neural circuits. If your analysis toolkit can only ask "did the light do something?" you're leaving most of the story on the cutting room floor. The real questions are how, when, for how long, and in what pattern these circuits shape behavior (Loewinger et al., 2026).

Why You Should Care

Optogenetics is already being explored as a potential therapy for conditions like Parkinson's disease, epilepsy, and depression. As these applications move closer to humans, the ability to precisely characterize how neural stimulation changes behavior over time isn't just an academic exercise. It could mean the difference between a treatment protocol that works and one that looks good in a group average but misses the nuances that matter for individual patients.

So yes, the stats nerds just made the laser-brain people's work significantly more powerful. And honestly? That's the kind of crossover episode we need more of.

References

- Loewinger, G., Levis, A. W., & Pereira, F. (2026). Nonparametric Causal Inference for Optogenetics: Sequential Excursion Effects for Dynamic Regimes. Journal of the American Statistical Association. DOI: 10.1080/01621459.2026.2635071

- Deisseroth, K. (2015). Optogenetics: 10 years of microbial opsins in neuroscience. Nature Neuroscience, 18, 1213-1225. DOI: 10.1038/nn.4091. PMID: 26308982

- Grosenick, L., Marshel, J. H., & Deisseroth, K. (2015). Closed-Loop and Activity-Guided Optogenetic Control. Neuron, 86(1), 106-139. DOI: 10.1016/j.neuron.2015.03.034. PMCID: PMC4775736

- Boruvka, A., Almirall, D., Witkiewitz, K., & Murphy, S. A. (2018). Assessing Time-Varying Causal Effect Moderation in Mobile Health. Journal of the American Statistical Association, 113(523), 1112-1121. DOI: 10.1080/01621459.2017.1305274

- Klasnja, P., Hekler, E. B., Shiffman, S., Boruvka, A., Almirall, D., Tewari, A., & Murphy, S. A. (2015). Micro-Randomized Trials: An Experimental Design for Developing Just-in-Time Adaptive Interventions. Health Psychology, 34(Suppl), 1220-1228. DOI: 10.1037/hea0000305. PMCID: PMC4732571

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.