You don't often see researchers publicly declare their own field dead. It's bad for grants, awkward at conferences, and generally not a great career move. So when Giacomo Indiveri, one of the pioneers of neuromorphic engineering, publishes a commentary titled "Neuromorphic is dead" in Neuron, you know something interesting is going on.

But don't start preparing the funeral flowers just yet. Like all good provocative titles, this one has a twist. The full statement is "Neuromorphic is dead. Long live neuromorphic." This isn't a eulogy. It's a call to abandon what the field has become and return to what it was supposed to be.

First, What Even Is Neuromorphic Computing?

Let's back up. The original vision of neuromorphic engineering dates to the 1980s, and it was genuinely cool. The idea was to build computing systems that didn't just produce brain-like results but actually worked like brains. We're talking analog circuits, continuous signals, and architectures that mimicked the physical principles of biological neurons.

Why bother? Consider the numbers. Your brain runs on roughly 20 watts, about as much as a dim light bulb. Meanwhile, the data centers running modern AI systems draw megawatts, often tens of megawatts or more. That's a gap of roughly five or six orders of magnitude in power draw, for tasks that your brain handles without breaking a sweat.
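As a rough check on that gap, here is a back-of-the-envelope sketch in Python. The 20-watt figure comes from the paragraph above; the facility sizes of 1, 10, and 100 megawatts are illustrative assumptions, not numbers from Indiveri's commentary, and `orders_of_magnitude` is just a helper name made up for this example.

```python
import math

BRAIN_WATTS = 20.0  # rough figure quoted in the article


def orders_of_magnitude(facility_watts, brain_watts=BRAIN_WATTS):
    """Return log10 of the power ratio between a facility and a brain."""
    return math.log10(facility_watts / brain_watts)


# Hypothetical facility sizes, chosen only to show how the gap scales.
for megawatts in (1, 10, 100):
    gap = orders_of_magnitude(megawatts * 1e6)
    print(f"{megawatts:>3} MW vs 20 W: about {gap:.1f} orders of magnitude")
```

Under these assumptions, the "five or six orders of magnitude" range corresponds to facilities drawing somewhere between a few megawatts and a few tens of megawatts. Keep in mind this compares a whole building to a single brain, so it's a sense of scale rather than a like-for-like benchmark.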

Clearly, evolution figured something out about efficient information processing that our silicon engineers haven't cracked. The neuromorphic vision was to steal those secrets by building hardware that actually operates on brain-like principles.

Then AI Happened, and Everything Got Weird

Here's where the story gets messy. Artificial intelligence took off in a big way, and suddenly everyone wanted a piece of the "neuro" branding. The term "neuromorphic" started getting slapped on anything vaguely brain-adjacent.

Running neural networks on conventional digital hardware? Neuromorphic! Building chips optimized to do matrix multiplication faster for machine learning? Neuromorphic! Anything with "neuro" in the name that sounds like it might be related to intelligence? Absolutely neuromorphic!

The problem is that most of this stuff has nothing to do with the original vision. Digital systems running artificial neural networks don't capture what makes biological neural networks special. They're not analog. They don't have the physical constraints of real neurons. They don't embody the principles that evolution refined over hundreds of millions of years.

Indiveri argues this isn't just marketing confusion; it's a genuine loss for the field. By diluting the term to mean "anything with neural network vibes," we've abandoned the original and potentially more valuable research direction. Calling a digital neural network implementation "neuromorphic" is like calling a photograph of a bird "aviation." Sure, there's a conceptual connection, but you're missing the point entirely.

The Original Magic We Forgot

What made the original neuromorphic vision special wasn't just inspiration from brains. It was actually trying to copy the physical principles. Real neurons communicate with analog signals. They have inherent noise and variability. They're embedded in physical substrates with real-world constraints like power consumption, heat dissipation, and signal propagation delays.

These aren't bugs that biological brains have to work around. Evolution turned them into features. The brain's incredible efficiency comes partly from exploiting these physical properties rather than fighting them. Digital computing, by contrast, works by abstracting away the physical layer as much as possible.

When neuromorphic computing abandoned analog approaches in favor of digital implementations, it threw away exactly the stuff that might make brain-inspired computing genuinely revolutionary. We kept the branding and ditched the substance.

What a Revival Would Look Like

Indiveri's commentary advocates returning to the field's roots. This means actually building analog systems that physically mimic neuronal behavior, not just simulating neurons on digital hardware. It means integrating more deeply with fundamental neuroscience to understand which biological principles are worth copying and which are just evolutionary quirks.
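To make the distinction concrete, here is a minimal sketch of what "simulating neurons on digital hardware" typically looks like. It assumes a leaky integrate-and-fire model, a common textbook simplification that the commentary itself does not prescribe. The function name `simulate_lif` and every parameter value are illustrative choices, not taken from the paper.

```python
def simulate_lif(input_current, dt=1e-3, tau=20e-3,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Forward-Euler simulation of a leaky integrate-and-fire neuron.

    input_current: sequence of drive values, one per time step.
    Returns (voltages, spike_steps). All units and values are
    illustrative assumptions.
    """
    v = v_rest
    voltages = []
    spike_steps = []
    for step, drive in enumerate(input_current):
        # Discrete-time approximation of dv/dt = (-(v - v_rest) + drive) / tau
        v += dt * (-(v - v_rest) + drive) / tau
        if v >= v_thresh:
            # Threshold crossed: record a spike and reset the membrane.
            spike_steps.append(step)
            v = v_reset
        voltages.append(v)
    return voltages, spike_steps


# Constant drive above threshold for 200 steps (hypothetical input).
voltages, spike_steps = simulate_lif([1.5] * 200)
print(f"{len(spike_steps)} spikes in {len(voltages)} steps")
```

Each pass through the loop approximates continuous membrane dynamics with a fixed time step, one arithmetic update at a time. In an analog circuit of the kind the commentary argues for, that integration would be carried out by the physics of the device itself rather than computed step by step.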

Most importantly, it means recognizing that brain-inspired computing shouldn't just mean "running neural networks faster." The goal isn't to accelerate existing AI approaches; it's to develop fundamentally different computing paradigms that might unlock efficiencies we can't achieve with digital systems.

This is harder than the diluted version of neuromorphic computing. It requires genuinely interdisciplinary work spanning physics, electrical engineering, and neuroscience. It requires understanding biology well enough to know what to copy and what to ignore. It requires accepting that we might need to think about computation in ways that don't map neatly onto conventional computer science.

The Case for Taking "Neuro" Seriously

The takeaway here isn't that neuromorphic computing was a bad idea. It's that a good idea got corrupted by marketing pressures and the gravitational pull of the AI hype machine. There might be something genuinely valuable in the original vision, something that could lead to computers that are orders of magnitude more efficient than what we have now.

But capturing that value requires taking the "neuro" part seriously. Not as a branding exercise, but as a design philosophy rooted in understanding how biological brains actually work. You can't get there by calling conventional digital systems neuromorphic and hoping nobody notices the mismatch.

The king is dead. The question is whether we have the discipline to bring back what made the original monarchy worth having.

Reference: Indiveri G. (2025). Neuromorphic is dead. Long live neuromorphic. Neuron. doi: 10.1016/j.neuron.2025.09.020 | PMID: 41045930

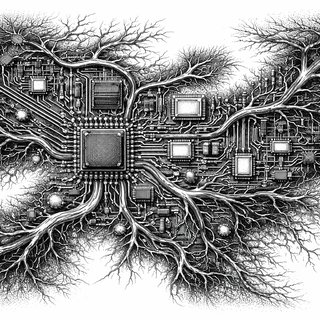

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.