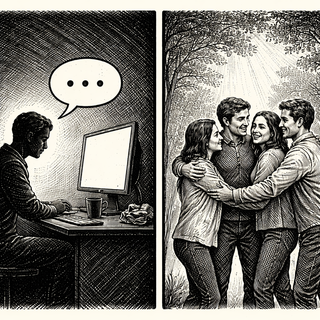

Loneliness has hit epidemic proportions in modern societies. We're more connected than ever through our devices while somehow feeling more isolated than ever in our actual lives. Enter AI chatbots, stage left, with what looks like a perfect solution. They're always available, never judgmental, infinitely patient, and willing to discuss your 2 AM existential spiral about whether your cat truly loves you.

So why not just befriend an algorithm and call it fixed? A perspective article in Trends in Cognitive Sciences (Wheatley et al., 2025) argues that your brain isn't going to fall for this convenient workaround.

The Very Tempting Promise of Digital Companionship

Let's be fair to the AI chatbots here. They're genuinely impressive conversationalists. Many chatbots built on modern large language models can remember what you told them last week, they validate your feelings with apparent sincerity, they never ghost you after a deep conversation, and they're constitutionally incapable of the petty betrayals that real humans specialize in.

For lonely people, the appeal is obvious. No social anxiety about whether you're being boring. No fear of rejection. No navigating complicated social dynamics. Just a patient, always-available entity that seems genuinely interested in your thoughts.

Some early studies even suggest that LLM interactions can temporarily ease feelings of loneliness. If that effect were robust and lasting, we'd have a scalable solution to a massive public health crisis. Just download an app and chat your way out of social isolation. Wouldn't that be convenient?

The Ancient Wiring Your Brain Is Running

Here's where cognitive neuroscience shows up to complicate the story. Human brains evolved over millions of years in social environments where connection meant survival. Our neural circuitry for attachment, belonging, and social bonding developed long before anyone conceived of language models.

That circuitry is looking for specific cues: physical presence, touch, shared space, the vulnerability of genuine give-and-take, the risk of rejection that comes with real relationships. These aren't arbitrary preferences. They're deep evolutionary signals that your brain uses to determine whether a social connection is "real."

An AI chatbot can simulate conversation brilliantly. What it can't do is be another conscious entity with its own interests, vulnerabilities, and a genuine capacity to be affected by your existence. Your brain, on some level, knows the difference even when your conscious mind is enjoying the conversation.

It's a bit like trying to satisfy hunger with pictures of delicious food. Your eyes might be entertained, but your stomach knows you're faking.

Loneliness Is More Than Just "No One to Talk To"

Here's a common misconception: loneliness is about lacking conversation partners. If that were true, AI chatbots would be a reasonable solution. But loneliness is actually about unmet needs for belonging, intimacy, and connection with beings who have their own independent existence.

Part of what makes human relationships meaningful is that the other person chooses to be there. They have their own life, their own concerns, their own reasons for investing time in you. When a friend supports you through a hard time, that support means something precisely because they could have been doing something else. They chose you.

An AI programmed to be supportive is doing what it was designed to do. There's no choice, no sacrifice, no genuine investment. The supportiveness is a feature, not a relationship. Your brain's social circuitry, which evolved to gauge who is genuinely invested in you, isn't easily fooled.

The Uncomfortable Implications

The authors of this perspective argue that real solutions to loneliness require societal changes, not technological band-aids. We need cities designed for human connection rather than efficient isolation. We need public spaces that foster genuine community rather than transactional interactions. We need fewer barriers to social participation for people who are elderly, disabled, economically marginalized, or otherwise pushed to the edges of social networks.

These solutions are hard. They require political will, resource allocation, and changes to how we organize society. They're definitely less convenient than downloading an app.

But here's the risk of treating AI companionship as a solution: it might let us off the hook for doing the actual work. If lonely people can get just enough relief from chatbots to stop complaining, society faces less pressure to address the underlying causes of isolation.

The Nuanced Truth

This isn't to say AI chatbots are worthless for lonely people. They might provide meaningful stopgaps. They might be helpful as supplements to human connection rather than replacements. They might assist people in practicing social skills before venturing into scarier real-world interactions.

But the authors' argument is that they can't replace the real thing. The loneliness epidemic won't be solved by clever algorithms, no matter how sophisticated they become. At some point, humans need other humans, with all the messiness, risk, and genuine connection that entails.

Sometimes there are no shortcuts, even very clever technological ones. The good news is that the real solution, rebuilding human social infrastructure, would improve lives in ways that go far beyond addressing loneliness. The bad news is that it's much harder than downloading an app.

Reference: Wheatley T, et al. (2025). Can AI really help solve the loneliness epidemic? Trends in Cognitive Sciences. doi: 10.1016/j.tics.2025.08.002 | PMID: 40962648

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.