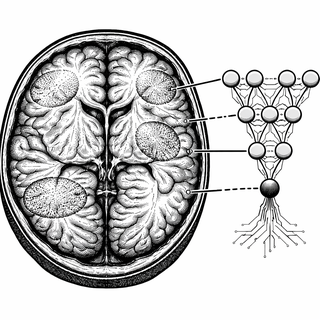

Picture this: you've got a super-smart AI that can look at brain scans and accurately identify autism. Sounds great, right? There's just one tiny problem. When you ask it "how did you know?", it basically shrugs and says "I just do." That's what scientists call a black box model, and it's about as useful to a clinician as a Magic 8-Ball with a medical degree.

A new study in EClinicalMedicine set out to build something better: an AI that not only detects autism from brain scans but also shows its work. Think of it as the difference between a student who gets the right answer and a student who can explain the reasoning behind it. Turns out, that difference matters a lot when we're talking about diagnosing actual humans.

The "Trust Me, I'm An Algorithm" Problem

Right now, diagnosing autism spectrum disorder (ASD) is a pretty subjective process. Clinicians observe behaviors, interview families, run through checklists. It works, but it's not exactly objective, and different evaluators can reach different conclusions. Brain imaging combined with machine learning seems like an obvious solution. Brains don't lie, and computers don't have bad days, right?

Well, here's the catch. The best-performing machine learning models tend to be the most opaque. They push data through layers of mathematical transformations governed by millions of learned parameters, and even their creators can't fully trace how a given scan turns into a given answer. When a neural network says "this brain scan indicates autism," it doesn't tell you which brain regions mattered, which patterns it noticed, or whether it's picking up on something meaningful or just an artifact in the data.

Would you trust a diagnosis like that? Would any responsible doctor? The answer is clearly no, and that's why interpretable AI has become such a hot topic in medical applications.

Opening the Black Box with ABIDE

The researchers turned to ABIDE (the Autism Brain Imaging Data Exchange), a massive, publicly available collection of functional MRI scans from individuals with and without autism, pooled from many imaging sites. It's exactly the kind of large, diverse dataset you need to train a machine learning model that might actually generalize to new patients.

But here's where they got clever. Instead of just maximizing accuracy, they built explainability into the approach from the ground up. It identifies not just whether a scan gets classified as autistic, but which specific features drove that classification. It's like getting a receipt for your diagnosis.

The model points to particular brain regions and connectivity patterns that influenced its decision. So instead of a mystical "autism: yes" verdict, clinicians get something more like "autism: yes, and here's why I think so, look at these specific areas." That's a conversation a doctor can actually work with.
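For readers curious what a diagnostic "receipt" can look like in code, here's a minimal Python sketch. It is not the study's method: the article doesn't say which attribution technique the authors used, so this swaps in the simplest one, a logistic (linear) classifier whose score splits exactly into one weight × feature term per brain connection. It also assumes connectivity is measured as the Pearson correlation between pairs of regional fMRI time series. The region names, time series, weights and bias are all made up for illustration.

```python
# Hypothetical sketch: "explaining" a linear ASD classifier on fMRI connectivity.
# Assumptions (not from the study): Pearson-correlation connectivity, a plain
# logistic model, and made-up region names, signals, weights and bias.
from itertools import combinations
from math import exp, sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length regional time series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(sxx * syy)


def connectivity(time_series):
    """Map {region: signal} to {(region_a, region_b): correlation} for every pair."""
    regions = sorted(time_series)
    return {(a, b): pearson(time_series[a], time_series[b])
            for a, b in combinations(regions, 2)}


def explain(features, weights, bias):
    """Return P(ASD) and each connection's additive contribution to the logit."""
    contributions = {edge: weights.get(edge, 0.0) * value
                     for edge, value in features.items()}
    logit = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + exp(-logit))
    # Largest absolute contribution first: this ranked list is the "receipt".
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


def region_scores(ranked):
    """Credit each region with the absolute contributions of the connections it joins."""
    scores = {}
    for (a, b), contribution in ranked:
        for region in (a, b):
            scores[region] = scores.get(region, 0.0) + abs(contribution)
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))


if __name__ == "__main__":
    signals = {  # toy time series, one per hypothetical region
        "region_1": [1, 2, 3, 4, 5],
        "region_2": [2, 4, 6, 8, 10],
        "region_3": [5, 4, 3, 2, 1],
    }
    weights = {  # hypothetical "trained" weights
        ("region_1", "region_2"): 0.8,
        ("region_1", "region_3"): -0.3,
        ("region_2", "region_3"): 0.1,
    }
    prob, ranked = explain(connectivity(signals), weights, bias=-0.2)
    print(f"P(ASD) = {prob:.2f}")
    for edge, contribution in ranked:
        print(edge, round(contribution, 2))
    print(region_scores(ranked))
```

With these toy numbers, the strongest "reason" is the connection between region_1 and region_2, and region_1 comes out as the most important region overall. For a linear model this breakdown is exact; deep networks need attribution methods that approximate this kind of breakdown, and different methods can disagree, which is part of what makes explainable AI hard.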

The Usual Suspects Show Up

When the researchers analyzed which brain regions consistently mattered for the ASD classification, they found something reassuring: the model was focusing on areas that actually make biological sense. We're talking about regions involved in social cognition, language processing, and sensory integration. These are exactly the functions that are clinically affected in autism.

This is a big deal. If the model had been flagging random brain regions with no connection to autism's known symptoms, that would be a red flag. It might mean the algorithm was picking up on confounding factors, such as differences in scanners or acquisition protocols between imaging sites, rather than actual neurobiological differences. Instead, the AI was essentially rediscovering, straight from the imaging data, what decades of autism research had already suggested.

Even better, these patterns were spatially consistent across subjects, which makes noise or random variation an unlikely explanation. These appear to be reliable biomarkers that could potentially be used in real clinical settings.
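What does "consistent across subjects" look like in practice? One simple check, assumed here for illustration rather than taken from the paper, is to rank regions by importance separately for each person and count how often each region lands in that person's top k. A region that makes the list for nearly everyone is a stronger biomarker candidate than one that shows up only occasionally. The region names and per-subject scores below are invented.

```python
# Hypothetical consistency check: how often does each region rank among a
# subject's top-k most important regions? Scores are made up for illustration.
from collections import Counter


def top_k_frequency(per_subject_scores, k):
    """Return {region: fraction of subjects whose top-k includes it}, most frequent first."""
    counts = Counter()
    for scores in per_subject_scores:
        top = sorted(scores, key=scores.get, reverse=True)[:k]
        counts.update(top)
    n = len(per_subject_scores)
    return {region: count / n for region, count in counts.most_common()}


if __name__ == "__main__":
    subjects = [  # per-subject importance scores for three hypothetical regions
        {"region_1": 0.9, "region_2": 0.5, "region_3": 0.1},
        {"region_1": 0.7, "region_2": 0.2, "region_3": 0.6},
        {"region_1": 0.8, "region_2": 0.4, "region_3": 0.3},
    ]
    for region, share in top_k_frequency(subjects, k=2).items():
        print(f"{region}: top-2 in {share:.0%} of subjects")
```

Here region_1 makes every subject's top two, while region_3 makes it only once. With just three regions and k = 2, though, chance alone would put any region in the top list two-thirds of the time, so a real analysis has to compare these frequencies against that kind of baseline.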

Why This Actually Matters for Real People

An explainable model does double duty. First, it can support clinical diagnosis by providing an objective, reproducible assessment. But second, and maybe more importantly, it helps advance our understanding of what autism actually looks like in the brain. Every time the model makes a prediction, it's generating hypotheses about the neurobiology of ASD that researchers can investigate further.

There's also the trust factor. Clinicians are, rightfully, skeptical of algorithmic diagnoses they can't understand. But when a model says "I think this person has ASD because of altered connectivity in these social cognition regions," a doctor can evaluate whether that reasoning makes sense. They can integrate it with their clinical judgment rather than blindly accepting or rejecting a black box verdict.

Looking ahead, this approach might help identify autism subtypes based on neural profiles, which could eventually lead to more personalized interventions. Autism isn't one thing; it's a spectrum, and understanding the neural diversity within that spectrum is the next frontier.

Reference: Bhattacharyya S, et al. (2025). Identification of critical brain regions for autism diagnosis from fMRI data using explainable AI: an observational analysis of the ABIDE dataset. EClinicalMedicine. doi: 10.1016/j.eclinm.2025.103452 | PMID: 41181843

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.