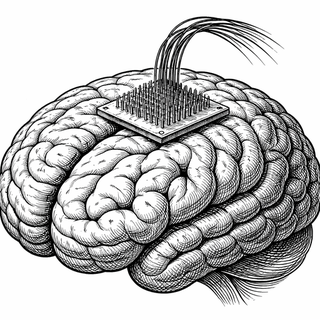

Under a microscope, a microelectrode array looks almost delicate - a tiny bed of needles, each thinner than a human hair, glinting like some miniaturized fakir's mat designed for neurons. Implanted into the motor cortex, these silicon slivers eavesdrop on the electrical chatter of brain cells that once orchestrated speech. And in one remarkable study, they picked up a conversation that nobody expected to hear.

Trapped Inside, Still Broadcasting

Here's the thing about locked-in syndrome: your mind is running at full speed while your body has essentially clocked out. For people with advanced amyotrophic lateral sclerosis (ALS), the progression can be brutal. First the limbs go. Then speech. Eventually, even the ability to blink - the last lifeline for communication - can disappear. You're conscious, aware, thinking complete thoughts, but you've got no output channel. It's like having a fully loaded smartphone with a dead screen and blown speakers: everything inside still works, but nothing gets out.

For one participant in a new study published in Cell Reports (Jude et al., 2026), this was daily life. They had long-standing anarthria (complete inability to speak) and locked-in syndrome, and they depended on a ventilator to breathe. Previous brain-computer interface (BCI) speech studies had worked with people who still had some vocal ability - they could attempt to speak, even if the words came out garbled. This person couldn't produce sound at all. The question was bold: could a computer still decode what their brain was trying to say?

Reading the Brain's Draft Folder

The research team, a collaboration spanning BrainGate and multiple institutions, implanted intracortical microelectrode arrays into the participant's motor cortex - the brain region that normally sends marching orders to your lips, tongue, and vocal cords. Even though those commands hadn't reached their destination in years, the neural patterns were still there. Think of it as your brain writing emails that never get sent. The drafts folder? Still full.

And the results were genuinely exciting. The team could decode phonemes (the smallest units of speech sound), individual words, and higher-order language units at accuracies well above chance. The brain hadn't forgotten how to speak. It was still rehearsing.
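To make "well above chance" concrete, here is a minimal sketch of one common way to check it: a one-sided binomial test against a uniform guessing rate. The numbers below (39 phoneme classes, 100 trials, 20 correct) are hypothetical placeholders, not figures from the study, and the paper may well have used a different method, such as a permutation test.

```python
from math import comb


def binomial_tail(successes: int, trials: int, chance: float) -> float:
    """Probability of getting at least `successes` correct out of `trials`
    if every trial were an independent guess with success rate `chance`."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical illustration only: none of these values come from the study.
n_classes = 39            # assumed number of phoneme classes
chance = 1 / n_classes    # uniform guessing rate (a simplifying assumption)
trials = 100              # assumed number of decoded phonemes
correct = 20              # assumed number decoded correctly

p_value = binomial_tail(correct, trials, chance)
print(f"chance accuracy: {chance:.3f}, observed: {correct / trials:.3f}")
print(f"probability of doing this well by guessing: {p_value:.2e}")
```

A uniform guessing rate is the simplest baseline. If some phonemes occur far more often than others, a decoder that always guesses the most common one would beat it, which is one reason shuffled-label baselines are often preferred in practice.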

Now, before anyone starts envisioning fluent, real-time conversation, a reality check: sentence-level decoding accuracy was lower than what's been achieved with participants who have dysarthria (impaired but not absent speech). Previous BCI work has hit some jaw-dropping benchmarks - a 2023 Nature study demonstrated 62 words per minute with a 23.8% word error rate on a 125,000-word vocabulary (Willett et al., 2023), and a 2024 study in the New England Journal of Medicine reported word error rates consistently below 5% (Card et al., 2024). This study didn't match those numbers, but that's not really the point.
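For readers unfamiliar with the metric, word error rate is conventionally the number of word substitutions, deletions, and insertions needed to turn the decoded sentence into the intended one, divided by the number of words in the intended sentence. The sketch below computes it with a standard edit-distance table. The example sentences are invented, and the function name `word_error_rate` is ours, not something taken from any of the cited papers.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1        # reference word missing from output
            insertion = curr[j - 1] + 1   # extra word in the output
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# Invented example: one wrong word ("sum") and one dropped word ("please").
intended = "i would like some water please"
decoded = "i would like sum water"
print(f"{word_error_rate(intended, decoded):.3f}")  # prints 0.333
```

By this measure, a 23.8% word error rate means roughly one word in four needs correcting, while a rate below 5% means fewer than one in twenty.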

Why This Matters More Than the Numbers Suggest

The point is that it worked at all.

Look. The earlier speech BCI demonstrations described above relied on participants who could still produce at least some sound when they tried to speak. There was always this nagging question hovering over the field: what happens when the motor system has been silent for years? Does the neural code for speech degrade? Does the brain's speech machinery rust from disuse?

This study suggests not, at least for this one participant. The signal persists. The brain keeps drafting those unsent emails, and we can learn to read them.

The researchers also laid out a roadmap for improvement. One key insight: for someone with long-standing anarthria, a purely phoneme-by-phoneme decoding approach might not cut it. Instead, they suggest decoding linguistic units directly from the middle precentral gyrus - essentially reading the brain's intent at a higher level rather than trying to reconstruct speech sound by sound. They also propose closed-loop training, where the participant gets real-time feedback to help sharpen their imagined speech patterns. A recent study on inner speech in motor cortex supports this direction, showing that imagined sentences can be decoded in real time (Cell, 2025).

The Bigger Picture

A comprehensive review in Nature Reviews Neuroscience noted that the speech neuroprosthesis field has progressed from basic demonstrations to clinically viable systems in just a few years (Silva et al., 2024). This latest study pushes the boundary further - into territory where patients have the most to gain and the fewest alternatives.

For the roughly 30,000 Americans living with ALS, and the subset who progress to locked-in syndrome, this isn't a tech novelty. It's a potential lifeline back to the most human thing we do: talk to each other.

The brain wants to speak. Now we just need to get better at listening.

References:

- Jude, J.J., Haro, S., Levi-Aharoni, H., et al. (2026). Decoding intended speech with an intracortical brain-computer interface in a person with long-standing anarthria and locked-in syndrome. Cell Reports, 44(4), 117162. DOI: 10.1016/j.celrep.2026.117162 | PMID: 41920737 | PMC12363778
- Willett, F.R., Kunz, E.M., Fan, C., et al. (2023). A high-performance speech neuroprosthesis. Nature, 620, 1031-1036. DOI: 10.1038/s41586-023-06377-x
- Card, N.S., Wairagkar, M., Iacobacci, C., et al. (2024). An accurate and rapidly calibrating speech neuroprosthesis. New England Journal of Medicine, 391, 609-618. DOI: 10.1056/NEJMoa2314132 | PMC11030484
- Silva, A.B., Littlejohn, K.T., Liu, J.R., et al. (2024). The speech neuroprosthesis. Nature Reviews Neuroscience, 25, 473-492. DOI: 10.1038/s41583-024-00819-9
- Kunz, E.M., et al. (2025). Inner speech in motor cortex and implications for speech neuroprostheses. Cell. DOI: 10.1016/j.cell.2025.06.014

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.