Without understanding how the brain's wiring translates into actual activity, we're essentially trying to predict traffic patterns from a city map. Sure, you know where the roads go, but that tells you nothing about the 5 PM gridlock on the highway or why everyone suddenly heads to Costco on Saturdays.

That's the problem neuroscientists have been wrestling with for years. We've gotten remarkably good at mapping the brain's "roads" - the functional connections between different regions. But translating that wiring diagram into predictions about what the brain actually does? That's been the missing piece.

Enter the Attractor: Your Brain's Gravitational Wells

A research team led by scientists at the University of Duisburg-Essen has just dropped a computational model that might finally bridge that gap. They call it fcANN (functional connectivity-based Attractor Neural Networks), and the name alone tells you these folks have embraced their inner nerd.

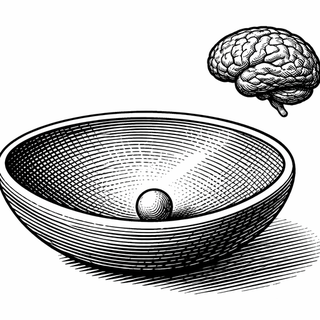

Here's the gist: imagine your brain's activity as a ball rolling around a landscape of hills and valleys. The valleys are "attractor states" - stable activity patterns your brain naturally settles into, like a marble finding the lowest point in a bowl. These aren't random; they represent meaningful configurations like "paying attention to something external" or "lost in thought about that embarrassing thing you said in 2014."
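To make the ball-and-valley picture concrete, here is a toy one-dimensional landscape with two valleys. It is a minimal sketch under stated assumptions: the energy function E(x) = (x² − 1)² and the helper names `energy`, `gradient`, and `roll_downhill` are illustrative inventions, not anything from the study. Each starting point simply follows the downhill slope until it settles in the nearest valley.

```python
# Toy 1-D "energy landscape" with two valleys (attractors) at x = -1 and x = +1
# and a hill at x = 0. E(x) = (x^2 - 1)^2 is an illustrative assumption,
# not the brain's actual landscape.

def energy(x: float) -> float:
    return (x * x - 1.0) ** 2

def gradient(x: float) -> float:
    # dE/dx = 4x(x^2 - 1)
    return 4.0 * x * (x * x - 1.0)

def roll_downhill(x: float, step: float = 0.01, n_steps: int = 2000) -> float:
    """Follow the negative gradient, like a ball rolling into the nearest valley."""
    for _ in range(n_steps):
        x -= step * gradient(x)
    return x

for start in (-1.8, -0.3, 0.4, 1.5):
    end = roll_downhill(start)
    print(f"start {start:+.1f} -> settles at {end:+.3f} (energy {energy(end):.4f})")
```

Starting points on either side of the hill end up in different valleys, which is all "attractor state" means here: a place the dynamics settle into and stay.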

The fcANN model uses your brain's functional connectivity - basically, which regions tend to activate together - to predict where those valleys are and how the ball rolls between them.
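Here is a rough sketch of that idea, assuming a continuous-state Hopfield-style update x ← tanh(β·W·x) in which the weight matrix W is simply the correlation matrix between regional time series. The synthetic data, β = 0.9, and the `relax` helper are hypothetical choices for illustration; the study's actual preprocessing, connectivity estimator, and update rule may differ.

```python
import numpy as np

# Hypothetical stand-in for preprocessed fMRI data: 400 time points x 10 regions.
rng = np.random.default_rng(0)
n_time, n_regions = 400, 10
timeseries = rng.standard_normal((n_time, n_regions))
# Give regions 0-4 and 5-9 a shared signal each, so they "activate together".
timeseries[:, :5] += rng.standard_normal((n_time, 1))
timeseries[:, 5:] += rng.standard_normal((n_time, 1))

# Functional connectivity: Pearson correlation between regional time series.
W = np.corrcoef(timeseries, rowvar=False)
np.fill_diagonal(W, 0.0)  # no self-connections, as in classic Hopfield networks

def relax(state, W, beta=0.9, max_iter=1000, tol=1e-8):
    """Iterate x <- tanh(beta * W @ x) until the state stops changing."""
    for _ in range(max_iter):
        new_state = np.tanh(beta * W @ state)
        if np.max(np.abs(new_state - state)) < tol:
            return new_state
        state = new_state
    return state

# Start from many random activity patterns and keep the distinct end states.
attractors = []
for _ in range(50):
    x = relax(rng.uniform(-1, 1, n_regions), W)
    if not any(np.allclose(x, a, atol=1e-4) for a in attractors):
        attractors.append(x)

print(f"{len(attractors)} distinct attractor states found")
for a in attractors:
    print(np.sign(a).astype(int))
```

The valleys here are never specified by hand: they fall out of the connectivity matrix. β is kept below 1 in this sketch because the correlation-based W has no eigenvalue below −1, which rules out the two-step oscillations that synchronous updates can otherwise produce.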

Why Orthogonal Organization Is Actually Cool

The team tested their model across seven different neuroimaging datasets, covering everything from people lying in scanners doing nothing (harder than it sounds) to folks actively performing tasks to patients with brain disorders. And they found something theorists had predicted but that had not been demonstrated in human brain data: the brain's major attractor states are approximately orthogonal to each other.

Translation for those of us who haven't touched linear algebra since college: the brain's favorite activity patterns barely overlap. Orthogonal patterns are uncorrelated, so how strongly one is expressed tells you essentially nothing about the others. It's as if your brain organized its filing system so that "focused work mode" and "daydreaming about lunch" are kept cleanly separate. No accidental cross-contamination between mental states.
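One common way to check whether activity patterns are orthogonal is to compute the cosine similarity between every pair: 0 means no overlap, 1 means identical, and −1 would mean one pattern is the mirror image of the other. The three patterns below are hypothetical and chosen to be exactly orthogonal; with real attractor states you would expect values close to, but not exactly, zero.

```python
import numpy as np

def cosine_similarity_matrix(patterns: np.ndarray) -> np.ndarray:
    """Pairwise cosine similarity between rows (one activity pattern per row)."""
    unit = patterns / np.linalg.norm(patterns, axis=1, keepdims=True)
    return unit @ unit.T

# Hypothetical attractor patterns over 4 regions (rows = states).
patterns = np.array([
    [1.0,  1.0,  1.0,  1.0],
    [1.0, -1.0,  1.0, -1.0],
    [1.0,  1.0, -1.0, -1.0],
])

sim = cosine_similarity_matrix(patterns)
off_diag = sim[~np.eye(len(sim), dtype=bool)]  # similarities between different states
print(np.round(sim, 3))
print("max |off-diagonal similarity|:", np.abs(off_diag).max())
```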

This orthogonality isn't just a neat mathematical property: it's what theories built on the Free Energy Principle predict. The Free Energy Principle, a theoretical framework developed by Karl Friston, tries to explain basically everything the brain does through the lens of minimizing surprise (more precisely, minimizing variational free energy, an upper bound on surprise). The brain, according to this view, is constantly trying to be less wrong about the world, and orthogonal attractors are an efficient way to organize that effort.
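"Minimizing surprise" has a precise meaning. In the standard formulation (see Friston 2010), the surprise of an observation o is its negative log-probability, −ln p(o), and the variational free energy F of an approximate belief q(s) over hidden states s bounds it from above:

```latex
\begin{aligned}
F[q] &= \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big] \\
     &= -\ln p(o) + D_{\mathrm{KL}}\big[q(s)\,\|\,p(s \mid o)\big] \\
     &\ge -\ln p(o)
\end{aligned}
```

The second line follows from writing p(o, s) = p(s | o) p(o); the inequality holds because a KL divergence is never negative. This only shows why lowering F pushes surprise down; the argument that this favors orthogonal attractors comes from the theory itself and is not derived here.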

From Rest to Task to Disorder

What makes the fcANN framework particularly exciting is its versatility. The same model that predicts resting-state brain dynamics also works when you throw a cognitive task at someone. The attractor landscape shifts, new valleys emerge, and the brain's activity ball rolls to different places.
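One simple way to picture a task "shifting the landscape" in a Hopfield-style model is to add an external input h to the update, x ← tanh(β·(W·x + h)), which tilts the energy surface toward some states. Treating a task as such an input is an assumption made for illustration, not necessarily how the study modeled task conditions; the 6-region connectivity, the input values, and the `relax` helper are hypothetical.

```python
import numpy as np

# Hypothetical 6-region connectivity: two modules of 3 regions each.
# Regions within a module are positively coupled; the modules are uncoupled.
module = np.ones((3, 3)) - np.eye(3)
W = np.block([[module, np.zeros((3, 3))],
              [np.zeros((3, 3)), module]])

def relax(state, W, h, beta=0.8, max_iter=1000, tol=1e-10):
    """Iterate x <- tanh(beta * (W @ x + h)) until the state stops changing.

    h is an external input; using it to stand in for a task is an
    illustrative assumption.
    """
    for _ in range(max_iter):
        new_state = np.tanh(beta * (W @ state + h))
        if np.max(np.abs(new_state - state)) < tol:
            return new_state
        state = new_state
    return state

start = np.full(6, 0.1)                              # same weak starting activity
rest_input = np.zeros(6)                             # "rest": no external drive
task_input = np.array([0, 0, 0, -1.0, -1.0, -1.0])   # hypothetical task drive

rest_state = relax(start, W, rest_input)
task_state = relax(start, W, task_input)
print("rest:", np.sign(rest_state).astype(int))
print("task:", np.sign(task_state).astype(int))
```

Same wiring, same starting point, different destination: the input reshapes which valley is reachable, so the second module settles into the opposite state.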

Even more intriguing: the model captures differences associated with brain disorders. When something goes wrong, the attractor landscape gets distorted. Understanding how it gets distorted could eventually help us understand why certain symptoms emerge and potentially how to nudge things back toward healthier configurations.

The attractor states themselves map onto neurobiologically meaningful patterns. The researchers identified configurations corresponding to what they call "internal context" (self-referential thought, the stuff the default mode network handles), "external context" (attending to the world), "action," and "perception." Your brain isn't just randomly bouncing between states - it's moving through a meaningful landscape of cognitive modes.

What This Means for the Future

Current neuroimaging analysis is largely descriptive - we see that region A activates during task B, we note correlations between regions, we publish papers. But description isn't explanation. The fcANN approach offers something more: a generative model that can actually predict dynamics from connectivity.

This has real implications for clinical neuroscience. If we can characterize how a patient's attractor landscape differs from those of healthy controls, we might eventually target interventions more precisely. Research into biomarkers for conditions like schizophrenia and depression has arguably struggled in part because we've been looking at static snapshots rather than dynamic landscapes.

The model also connects beautifully to older ideas in computational neuroscience, particularly Hopfield networks, the associative memory models that John Hopfield developed in the 1980s (work that earned him a share of the 2024 Nobel Prize in Physics). The brain really does seem to work like a content-addressable memory system, where partial or noisy inputs get "cleaned up" by rolling down to the nearest attractor. Your brain is constantly pattern-completing, and now we have a principled way to model that process at the whole-brain level.
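For a feel of the "clean-up" behavior, here is a minimal sketch of a classic binary Hopfield network: store a few ±1 patterns with the outer-product (Hebbian) rule, corrupt one, and let asynchronous updates pull it back. The network size, the three random "memories", and the `recall` helper are hypothetical. Unlike this classic setup, the fcANN approach described earlier takes its weights from measured functional connectivity rather than from hand-picked stored patterns.

```python
import numpy as np

rng = np.random.default_rng(1)
n_units = 100

# Three random +/-1 patterns to store (hypothetical "memories").
patterns = rng.choice([-1, 1], size=(3, n_units))

# Outer-product (Hebbian) storage, +/-1 convention, no self-connections.
W = patterns.T @ patterns / n_units
np.fill_diagonal(W, 0.0)

def recall(state, W, n_sweeps=10):
    """Asynchronous updates: each unit, in random order, takes the sign of its input."""
    state = state.copy()
    for _ in range(n_sweeps):
        for i in rng.permutation(len(state)):
            state[i] = 1 if W[i] @ state >= 0 else -1
    return state

# Corrupt 20% of the first memory, then let the network complete the pattern.
noisy = patterns[0].copy()
flip = rng.choice(n_units, size=20, replace=False)
noisy[flip] *= -1

recovered = recall(noisy, W)
print("overlap before:", noisy @ patterns[0] / n_units)
print("overlap after: ", recovered @ patterns[0] / n_units)
```

An overlap of 1.0 means the stored pattern was recovered exactly. With only three patterns on 100 units, the network is far below its storage capacity, so the noisy input reliably rolls back into the right valley.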

The fcANN framework won't solve neuroscience overnight. But it represents exactly the kind of theory-driven, computationally grounded approach that might eventually let us move from describing what the brain does to actually understanding why.

References:

- Englert R, Kincses B, Kotikalapudi R, et al. Functional connectivity-based attractor dynamics of the human brain in rest, task, and disease. eLife. 2026;13:RP98725. DOI: 10.7554/eLife.98725 | PMCID: PMC12999174
- Friston K. The free-energy principle: a unified brain theory? Nature Reviews Neuroscience. 2010;11(2):127-138. DOI: 10.1038/nrn2787
- Khosla M, Jamison K, Ngo GH, Kuceyeski A, Sabuncu MR. Machine learning in resting-state fMRI analysis. Magnetic Resonance Imaging. 2019;64:101-121. PMCID: PMC4854787
- Hopfield JJ. Neural networks and physical systems with emergent collective computational abilities. Proceedings of the National Academy of Sciences. 1982;79(8):2554-2558. DOI: 10.1073/pnas.79.8.2554

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.