Confession: phase microscopy is hard to write about without sounding like a person trapped inside a grant application. It deals with invisible shifts in light, nanostructures thinner than your patience, and neural networks that are not, in fact, neurons. Still, this paper earns the effort because it asks a practical question - how do you image transparent living stuff quickly and precisely without hauling around a small chandelier of optics? [1]

The Part Where Light Gives Away the Secret

Most cells are terrible at being seen. Quantitative phase imaging, or QPI, gets around that by measuring how much a sample delays light rather than just how bright it looks. That delay tells you about thickness, structure, and refractive index - useful if you want to study live cells without dyes, stains, or other chemical costume changes [2,3].
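For a thin, uniform sample, that delay has a standard form: phi = 2π(n_sample - n_medium)·h/λ, where h is the thickness and λ the wavelength. The short sketch below evaluates it for a typical cell. The thickness, refractive indices, and 532 nm wavelength are illustrative assumptions, not values from the paper.

```python
import math

def phase_delay(thickness_um: float, n_sample: float, n_medium: float,
                wavelength_um: float) -> float:
    """Phase shift (radians) picked up by light crossing a thin, uniform sample.

    Thin-sample approximation: phi = 2*pi * (n_sample - n_medium) * thickness / wavelength.
    Thickness and wavelength must share the same unit (micrometres here).
    """
    optical_path_difference = (n_sample - n_medium) * thickness_um
    return 2 * math.pi * optical_path_difference / wavelength_um

# Illustrative values only (assumed, not from the paper):
# a 5 um-thick cell with index 1.38 in culture medium of index 1.33, green light at 532 nm.
phi = phase_delay(thickness_um=5.0, n_sample=1.38, n_medium=1.33, wavelength_um=0.532)
print(f"phase delay = {phi:.2f} rad")
```

A cell that barely changes the brightness of the image still delays the light by nearly half a wavelength (about 2.95 rad), which is plenty for QPI to measure. Phase alone mixes thickness and index together, so separating the two takes extra information.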

Classic QPI works, but it has baggage. Many systems need multiple images, moving parts, careful alignment, and reconstruction software that can be slow or touchy. That is a problem if you want something compact, fast, and robust [1-4].
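To see where the "multiple images" requirement comes from, consider classic four-step phase-shifting interferometry, one common multi-frame QPI scheme. It is chosen here as a familiar example; the paper does not single it out. The camera records four frames while the reference beam is shifted by a quarter wave each time, and the phase falls out of a simple arctangent. The phase map and intensity values below are synthetic.

```python
import numpy as np

def four_step_phase(i1, i2, i3, i4):
    """Recover wrapped phase from four interferograms with reference shifts 0, pi/2, pi, 3pi/2.

    With I_k = A + B*cos(phi + delta_k):
      I4 - I2 = 2B*sin(phi),  I1 - I3 = 2B*cos(phi)
    so phi = atan2(I4 - I2, I1 - I3), wrapped to [-pi, pi].
    """
    return np.arctan2(i4 - i2, i1 - i3)

# Synthetic test: a smooth, made-up phase bump on a 64 x 64 grid (illustrative only).
y, x = np.mgrid[-1:1:64j, -1:1:64j]
true_phase = 2.5 * np.exp(-(x**2 + y**2) / 0.2)   # peak 2.5 rad, so no phase wrapping occurs
background, modulation = 1.0, 0.8                  # assumed A and B

# One camera frame per reference shift; a real system steps a mirror or modulator between frames.
frames = [background + modulation * np.cos(true_phase + delta)
          for delta in (0, np.pi / 2, np.pi, 3 * np.pi / 2)]

recovered = four_step_phase(*frames)
print("max error (rad):", np.abs(recovered - true_phase).max())
```

Four exposures, plus a mechanism that steps the reference precisely between them, is exactly the kind of hardware and timing overhead a single-shot design tries to remove. If the sample moves between frames, the arithmetic no longer holds.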

The "Neural" Bit Is Not About Neurons

The title invites a small misunderstanding, so let's clear it up early. This is not a paper about imaging neural circuits in a mouse doing philosophy. The "neural" part refers to the AI model used to reconstruct phase information. The demonstrations use onion cells, a standard resolution chart, and custom-fabricated test samples [1].

Honestly, I respect the onion here. It did not ask for fame.

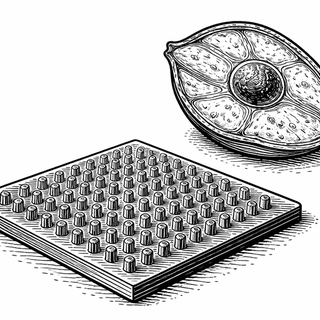

What the researchers built is a phase microscope that replaces bulky optics with a metasurface - a flat layer covered in tiny nanostructures that shape light very precisely. The design captures the information needed for phase recovery in a single shot, and a physics-informed neural network then corrects aberrations, alignment errors, and fabrication imperfections. In plain English: the hardware grabs a clever optical snapshot, and the software stops that snapshot from turning into nonsense [1].
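The "physics-informed" part is easier to see in a stripped-down toy. A candidate phase map is judged by pushing it back through a model of the optics and checking whether it reproduces what the camera actually recorded. In the sketch below, the "optics" is a hypothetical random linear matrix and the reconstruction is plain gradient descent rather than a neural network. Both are simplifications for illustration, not the authors' model or code.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical stand-in for the optics: a fixed linear "encoding" that maps a
# 50-pixel phase profile to 80 detector readings. Real metasurface optics are
# far richer; this only illustrates the structure of the problem.
n_pixels, n_readings = 50, 80
forward = rng.normal(size=(n_readings, n_pixels)) / np.sqrt(n_readings)

true_phase = np.sin(np.linspace(0, 3 * np.pi, n_pixels))                 # made-up sample
measurement = forward @ true_phase + 0.01 * rng.normal(size=n_readings)  # one noisy "shot"

REG = 1e-3  # small Tikhonov weight to keep the estimate well behaved

def physics_loss_grad(phase):
    """Gradient of ||forward @ phase - measurement||^2 / 2 + REG * ||phase||^2 / 2."""
    residual = forward @ phase - measurement  # mismatch with what the camera saw
    return forward.T @ residual + REG * phase

# Gradient descent directly on the phase. A physics-informed network would
# instead adjust its weights so that its output keeps this residual small.
step = 1.0 / (np.linalg.norm(forward, 2) ** 2 + REG)  # 1/L, a safe step size
estimate = np.zeros(n_pixels)
for _ in range(3000):
    estimate -= step * physics_loss_grad(estimate)

rel_error = np.linalg.norm(estimate - true_phase) / np.linalg.norm(true_phase)
print(f"relative error: {rel_error:.3f}")
```

In real optics the mapping from phase to recorded intensity is generally nonlinear, and in the paper's setting the model must also account for aberrations, misalignment, and fabrication errors. The toy keeps only the core loop: predict the measurement, compare it with reality, and shrink the mismatch.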

The payoff is real. The system achieved better than 840 nm resolution at 74 frames per second, all with a thin optical layer instead of the usual pile of lab gear [1].

Why This Is More Than a Fancy Flat Lens

This paper matters because QPI already has a serious resume. It can track live-cell growth, estimate dry mass, and capture subtle structural changes without labels. Reviews over the past few years keep pointing to the same opportunity: combine smarter computation with better optics, and you get imaging that is faster, smaller, and more practical outside specialist setups [2-5].
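Dry mass shows how a phase map turns into a biological number. A widely used QPI relation integrates phase over the cell footprint and divides by the specific refraction increment α of cellular material, commonly taken as roughly 0.18 to 0.21 mL/g. The sketch assumes α = 0.19 mL/g and uses a made-up phase patch. It is not tied to any dataset in the cited papers.

```python
import numpy as np

def dry_mass_pg(phase_map_rad, pixel_size_um, wavelength_um, alpha_um3_per_pg=0.19):
    """Estimate dry mass (picograms) from a phase map.

    Surface dry-mass density: sigma = wavelength * phase / (2 * pi * alpha),
    summed over pixels and multiplied by pixel area. alpha is the specific
    refraction increment; 1 mL/g equals 1 um^3/pg, so 0.19 mL/g = 0.19 um^3/pg.
    """
    density_pg_per_um2 = wavelength_um * phase_map_rad / (2 * np.pi * alpha_um3_per_pg)
    return float(density_pg_per_um2.sum() * pixel_size_um ** 2)

# Made-up example: a 10 x 10-pixel patch with a uniform 1 rad phase shift,
# 0.5 um pixels and 532 nm light (all values are assumptions for illustration).
patch = np.ones((10, 10))
print(f"dry mass ~ {dry_mass_pg(patch, pixel_size_um=0.5, wavelength_um=0.532):.1f} pg")
```

Because the calculation is just a weighted sum over the phase map, a faster and more precise phase measurement feeds directly into faster and more precise mass tracking.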

That trend is exactly where this study lands. Recent work has pushed compact meta-microscopes, metasurface-based phase imaging, and even metalens endoscopy, all trying to shrink QPI from a benchtop diva into something portable and useful [3-5]. This paper goes a step further by making the acquisition single-shot and the reconstruction physics-aware, which is a polite way of saying the AI is less likely to hallucinate its way through optics class [1].

So What Could This Actually Change?

If this approach holds up and scales, the obvious win is portable, real-time imaging of transparent samples. Think live cells in ordinary labs, faster screening in biology, and smaller devices for inspecting tissues or doing endoscopy where space is scarce [1,4,5]. You do not always need prettier pictures. Sometimes you need reliable numbers while the biology is still happening.

There is also a quieter point here. By folding more of the optical trickery into a single nanostructured surface, the system reduces the need for elaborate alignment and multi-part hardware. That matters, because many brilliant imaging methods die young in the gap between "works beautifully in the paper" and "works on a random Wednesday in someone else's lab."

Of course, this is not the final boss of microscopy. The demonstrations here were controlled and modest. Onion cells are useful, but they are not brain tissue, tumors, or a squirming in vivo preparation. The authors are showing an enabling technology, not a turnkey clinical device [1].

Still, the idea is hard to ignore: take a flat optic, add physics-aware AI, remove some of the mechanical drama, and suddenly phase microscopy starts looking less like elite lab equipment and more like something that could travel. For a field built around measuring invisible delays in light, that is a pretty visible step.

References

1. Lee GY, Kim C, Gopakumar M, et al. Neural phase microscopy with metasurface optics for real-time and nanoscale quantitative phase imaging. Nature Communications. 2026;17:1411. DOI: https://doi.org/10.1038/s41467-025-68151-z

2. Park J, Bai B, Ryu D, et al. Artificial intelligence-enabled quantitative phase imaging methods for life sciences. Nature Methods. 2023;20:1645-1660. DOI: https://doi.org/10.1038/s41592-023-02041-4

3. Huang Z, Cao L. Quantitative phase imaging based on holography: trends and new perspectives. Light: Science & Applications. 2024;13:145. DOI: https://doi.org/10.1038/s41377-024-01453-x PMCID: PMC11211409 (https://pmc.ncbi.nlm.nih.gov/articles/PMC11211409/)

4. Priscilla N, Sulejman SB, Roberts A, Wesemann L. New Avenues for Phase Imaging: Optical Metasurfaces. ACS Photonics. 2024;11(8):2843-2859. DOI: https://doi.org/10.1021/acsphotonics.4c00359

5. Shanker A, Fröch JE, Mukherjee S, et al. Quantitative phase imaging endoscopy with a metalens. Light: Science & Applications. 2024;13:305. DOI: https://doi.org/10.1038/s41377-024-01587-y

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.