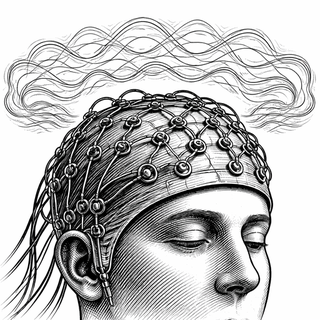

Let's play a game. Imagine you have to send a text message using only your eyebrows, a cap full of electrodes, and a brain that refuses to speak in clean, spreadsheet-ready sentences. That is the challenge of a non-invasive brain-computer interface, or BCI. You are trying to turn the brain's electrical weather into something a machine can understand - without opening the skull, which most people sensibly rank low on the wellness menu.

That is the setup for a 2025 review by Wang and colleagues, who focus on two trends arriving together: better AI for decoding brain signals, and better flexible electronics for collecting them (Wang et al., 2025). The point is straightforward. If non-invasive BCIs are going to leave the lab, they need both smarter software and less annoying hardware.

Non-invasive BCIs usually rely on signals like EEG, which measures electrical activity from the scalp. That sounds tidy until you remember what the signal has to survive: skull, skin, motion, sweat, electrical interference, and the general chaos of being attached to a blinking human. The result is useful, but noisy - more "one violin in a subway station" than crystal-clear confession.

This is where recent decoding work matters. The review by Wang and colleagues highlights deep learning, multimodal fusion, and closed-loop systems as major drivers of progress. A 2024 review in the Journal of Neural Engineering found that deep representation learning is helping BCIs pull structure out of messy EEG data, though generalization across tasks and people remains a stubborn problem (Guetschel et al., 2024). Your brain is not my brain, and neither of them got the memo about standardization. In practice, that means BCIs still tend to need calibration and retraining for each new user, which is not ideal when the goal is practical assistive tech.
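One common way to check whether a decoder generalizes to a brain it has never met is leave-one-subject-out evaluation: train on everyone except one person, then test on that person. The sketch below shows only the splitting step, in plain Python. The function name and the toy subject labels are made up for illustration and are not taken from any of the cited reviews.

```python
from collections import defaultdict


def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) once per subject.

    subject_ids has one entry per trial, naming the person who produced it.
    """
    by_subject = defaultdict(list)
    for index, subject in enumerate(subject_ids):
        by_subject[subject].append(index)

    for held_out in sorted(by_subject):
        test = by_subject[held_out]
        # Every trial from every other subject goes into the training set.
        train = sorted(
            i
            for subject, indices in by_subject.items()
            if subject != held_out
            for i in indices
        )
        yield held_out, train, test


# Hypothetical recording log: one label per EEG trial.
trial_subjects = ["ana", "ana", "ben", "ben", "ben", "chi"]
splits = list(leave_one_subject_out(trial_subjects))

for held_out, train, test in splits:
    print(held_out, "train:", train, "test:", test)
```

A model that scores well when trials from the same person appear on both sides of the split, but poorly under this scheme, is probably memorizing individuals rather than learning something portable.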

The Hat Needs Better Manners

Software gets a lot of headlines, but the hardware side is doing equally important work. A non-invasive BCI lives or dies by contact quality, comfort, and stability. If the electrodes shift, dry out, or irritate skin, people stop using the thing. Fair enough.

A 2025 review in npj Biomedical Innovations describes how flexible brain electronic sensors are improving wearability through softer materials and better scalp conformity, all aimed at gathering cleaner signals with less fuss (Xue et al., 2025). It is not flashy work, but it may decide whether BCI stays a demo or becomes something you would actually tolerate during rehab or home use.

And that matters because non-invasive BCIs are often the practical option. A 2024 Nature Medicine feature noted growing interest in non-invasive systems because implants are expensive, risky, and unlikely to become everyday tools for broad patient groups anytime soon (Webster, 2024). If invasive BCIs are the Formula 1 cars, non-invasive BCIs are trying to become the reliable hatchbacks.

Where This Could Actually Help Real Humans

The clearest near-term use case is rehabilitation. BCIs can detect an intention to move and pair that intention with feedback from a screen, a robotic device, or electrical stimulation. That pairing may help reinforce damaged brain networks after stroke. A 2024 systematic review and meta-analysis reported improved upper-extremity motor outcomes after stroke, especially when BCIs were used with structured rehabilitation (Zhang et al., 2024). Nobody should oversell that into a "mind-reading miracle cap," but the direction is real.
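To make "detect an intention to move, then give feedback" a little more concrete, here is a toy sketch of one widely used cue: the power of sensorimotor rhythms tends to drop when a person attempts or imagines a movement. The code assumes the samples have already been band-pass filtered to that rhythm, and the 30 percent threshold, the function names, and the sample values are all invented for illustration. The studies in the meta-analysis may have used quite different pipelines.

```python
def mean_power(samples):
    """Average squared amplitude of a window of samples."""
    return sum(x * x for x in samples) / len(samples)


def movement_intent(baseline, window, drop_threshold=0.3):
    """Return True when power in `window` has fallen by at least
    `drop_threshold` (as a fraction) relative to the resting baseline."""
    base = mean_power(baseline)
    if base == 0:
        return False  # No usable baseline, so make no claim.
    relative_drop = (base - mean_power(window)) / base
    return relative_drop >= drop_threshold


def feedback_for(baseline, window):
    """Map the detector's decision to a (hypothetical) feedback command."""
    return "trigger feedback" if movement_intent(baseline, window) else "wait"


# Made-up, already-filtered samples in arbitrary units.
rest = [2.0, -2.0, 2.0, -2.0]      # resting rhythm
attempt = [1.0, -1.0, 1.0, -1.0]   # rhythm suppressed during attempted movement
steady = [1.9, -1.9, 1.9, -1.9]    # barely changed from rest

print(feedback_for(rest, attempt))
print(feedback_for(rest, steady))
```

In a real system the "trigger feedback" branch would drive the screen, robotic device, or stimulator, and the threshold would be set per person during calibration rather than hard-coded.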

The larger promise goes beyond rehab. Better decoding and better sensors could support communication for people with severe paralysis, hands-free control in demanding environments, and more continuous brain-state monitoring. The Wang et al. review also points to multimodal systems that combine EEG with signals like fNIRS (functional near-infrared spectroscopy, which tracks blood-oxygen changes rather than electrical activity). If one channel is a fuzzy whisper, a second clue can help.
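The "second clue" idea can be sketched as late fusion: each signal gets its own classifier, and their probability estimates are blended before a decision is made. This is only one of several fusion strategies, and the weights, probabilities, and function names below are hypothetical rather than drawn from the review.

```python
def fuse_probabilities(p_eeg, p_fnirs, eeg_weight=0.5):
    """Weighted average of two classifiers' probabilities for the same class."""
    if not 0.0 <= eeg_weight <= 1.0:
        raise ValueError("eeg_weight must be between 0 and 1")
    return eeg_weight * p_eeg + (1.0 - eeg_weight) * p_fnirs


def decide(probability, threshold=0.5):
    """Turn a fused probability into a yes/no decision."""
    return probability >= threshold


# Made-up outputs: EEG alone is on the fence, fNIRS is more confident.
p_eeg = 0.48
p_fnirs = 0.80

fused = fuse_probabilities(p_eeg, p_fnirs)
print("EEG alone says yes:", decide(p_eeg))
print("Fused says yes:", decide(fused))
```

Here the EEG-only estimate falls just short of the threshold, while the fused estimate clears it. In practice the weight would be tuned on validation data, since a noisy second channel can hurt as easily as help.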

The Part Where We Stay Adults About It

The field still has serious problems. Signal quality changes from person to person. Real-world environments are full of interference. Long sessions can be uncomfortable. Models that shine on one dataset can sulk when faced with a different headset or a different clinic. Wang and colleagues are clear that long-term reliability, generalization, and hardware-software co-optimization remain unfinished business.

That is probably the healthiest way to view non-invasive BCIs right now. Not as magic. Not as vapor. More like a weather system finally beginning to organize. The algorithms are getting less gullible. The sensors are getting softer. And the dream is maturing from "look, the cursor moved" into something more grounded: technology that can meet the brain where it actually lives - noisy, variable, biological, and a little dramatic.

If that works, the payoff is not just cooler gadgets. It is a quieter kind of freedom: a way for thought to become action when the usual routes have been blocked. That is a big idea, even if it currently arrives wearing a very strange hat.

References

Wang S, Song X, Song X, Gu Y, Cong Z, Shen Y, Yu L. Non-Invasive Brain-Computer Interfaces: Converging Frontiers in Neural Signal Decoding and Flexible Bioelectronics Integration. Nano-Micro Letters. 2025. DOI: 10.1007/s40820-025-02042-2.

Guetschel P, Ahmadi S, Tangermann M. Review of deep representation learning techniques for brain-computer interfaces. Journal of Neural Engineering. 2024;21(6). DOI: 10.1088/1741-2552/ad8962.

Xue J, Zhong W, Zhao X, et al. Flexible brain electronic sensors advance wearable brain-computer interface. npj Biomedical Innovations. 2025. PMCID: PMC13055064.

Zhang M, Zhu F, Jia F, Wu Y, Wang B, Gao L, Chu F, Tang W. Efficacy of brain-computer interfaces on upper extremity motor function rehabilitation after stroke: A systematic review and meta-analysis. NeuroRehabilitation. 2024;54(2):199-212. DOI: 10.3233/NRE-230215.

Webster P. The future of brain-computer interfaces in medicine. Nature Medicine. 2024;30:1508-1509. DOI: 10.1038/d41591-024-00031-3.

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.