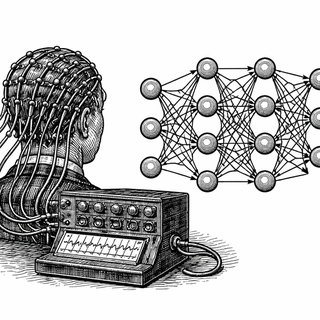

Ten years ago, EEG decoding often meant hand-picking features like an anxious person assembling a cheese board - frequency bands here, scalp channels there, and a quiet prayer that the brain would cooperate. Now the trend is to hand everything to deep learning and hope the machine invents electrophysiology from scratch. Fu and colleagues propose a less theatrical option: build a neural network that already knows a bit about how EEG works.[1]

The brain's crackly group chat

EEG records the electrical activity of the brain from the scalp. It is cheap, portable, and about as tidy as a live microphone in a windy pub. That makes it useful for brain-computer interfaces, or BCIs, where the aim is to turn neural signals into commands, predictions, or feedback without opening anyone's skull. A lovely ambition. The signal, naturally, has other ideas.[2]

This is the central annoyance of EEG. Classical methods can be fairly interpretable, but often leave performance on the table. Deep networks can do better, yet they tend to explain themselves with the charm of a locked filing cabinet.

A black box with some manners

The new paper introduces a function predefined convolutional neural network, or FPCNN.[1] The phrase sounds as if it should come with a government form, but the concept is sensible. Instead of using a completely generic convolutional layer, the authors build one around features neuroscientists already care about, especially frequency content and channel weighting.

So the model is not merely told, "Find something interesting." It is nudged toward the kinds of spatial and spectral patterns that matter in spontaneous EEG. That gives the parameters clearer physical meaning, which is rather useful when you would like to know whether the machine has discovered a brain signal or simply developed a hobby.
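For the curious, here is a minimal Python sketch of what a "function predefined" kernel could look like. It is an illustration, not the authors' implementation: the Gabor-style kernel (a Gaussian-windowed cosine), the names `gabor_kernel` and `band_power`, the 250 Hz sampling rate, and the synthetic two-channel data are all assumptions. The point is only that the filter is generated from two readable parameters, a centre frequency and a bandwidth, rather than learned tap by tap, and that channels are combined through explicit weights.

```python
import numpy as np

def gabor_kernel(center_hz, bandwidth_hz, fs, length):
    """Temporal kernel generated from two interpretable parameters.

    Hypothetical parameterisation: a Gaussian-windowed cosine. The
    predefined function used in the paper may well differ.
    """
    t = (np.arange(length) - (length - 1) / 2) / fs  # seconds, centred on 0
    sigma = 1.0 / (2 * np.pi * bandwidth_hz)         # wider band -> shorter window
    kernel = np.exp(-t**2 / (2 * sigma**2)) * np.cos(2 * np.pi * center_hz * t)
    return kernel / np.linalg.norm(kernel)           # unit-energy kernel

def band_power(eeg, channel_weights, center_hz, bandwidth_hz, fs, length=129):
    """Weight the channels, filter with the predefined kernel, return mean power."""
    virtual_channel = channel_weights @ eeg          # shape: (n_samples,)
    kernel = gabor_kernel(center_hz, bandwidth_hz, fs, length)
    filtered = np.convolve(virtual_channel, kernel, mode="same")
    return float(np.mean(filtered**2))

# Synthetic example: a 10 Hz rhythm on channel 0, weak noise on channel 1.
fs = 250
t = np.arange(0, 4, 1 / fs)
rng = np.random.default_rng(0)
eeg = np.vstack([np.sin(2 * np.pi * 10 * t), 0.1 * rng.standard_normal(t.size)])
weights = np.array([1.0, 0.0])                       # listen to channel 0 only

alpha_power = band_power(eeg, weights, center_hz=10, bandwidth_hz=2, fs=fs)
beta_power = band_power(eeg, weights, center_hz=25, bandwidth_hz=2, fs=fs)
print(alpha_power > beta_power)                      # the 10 Hz kernel responds far more
```

In a trainable version of this sketch, `center_hz`, `bandwidth_hz`, and the channel weights would be the quantities adjusted during training, which is why they would remain physically readable afterwards.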

The authors also add a trainable quadrature detector to help capture phase-related changes. Phase sounds technical because it is technical, but the idea is simple enough: timing matters. In neural rhythms, when something happens can matter almost as much as what happens. The network is being taught not just to listen for notes, but to notice the rhythm section.
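Quadrature detection itself is a standard signal-processing technique: project the signal onto a cosine and a sine at the frequency of interest, and the two projections together give both amplitude and phase. The Python sketch below shows the classical, fixed-frequency version only. The function name `quadrature_detect`, the 250 Hz sampling rate, and the test signal are assumptions for illustration, and the trainable variant described in the paper may work differently.

```python
import numpy as np

def quadrature_detect(signal, freq_hz, fs):
    """Estimate amplitude and phase of `signal` at `freq_hz`.

    Multiplies the signal by a cosine/sine reference pair and averages,
    which acts as a crude low-pass filter. Assumes the signal spans a
    whole number of cycles of `freq_hz`.
    """
    t = np.arange(signal.size) / fs
    in_phase = 2 * np.mean(signal * np.cos(2 * np.pi * freq_hz * t))
    quadrature = 2 * np.mean(signal * np.sin(2 * np.pi * freq_hz * t))
    amplitude = np.hypot(in_phase, quadrature)
    # For signal = A*cos(2*pi*f*t + phi): in_phase = A*cos(phi), quadrature = -A*sin(phi)
    phase = np.arctan2(-quadrature, in_phase)
    return amplitude, phase

# A 10 Hz oscillation with amplitude 1.5 and a phase offset of 0.7 rad.
fs = 250
t = np.arange(0, 2, 1 / fs)
signal = 1.5 * np.cos(2 * np.pi * 10 * t + 0.7)

amplitude, phase = quadrature_detect(signal, freq_hz=10, fs=fs)
print(round(amplitude, 3), round(phase, 3))
```

Two signals with identical power but different timing would give the same amplitude here and different phases, which is exactly the information a power-only feature throws away.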

Small gains, serious implications

In the authors' reported results, FPCNN beat comparison methods by 2.09%, 3.08%, and 3.41% across three spontaneous EEG datasets.[1] Those numbers will not make Hollywood write a screenplay, but EEG decoding is not a field that gives away percentage points for free. Small gains here are usually earned the hard way.

The efficiency claim may be just as important. The paper reports CPU-only training and testing times of 67.96 and 19.36 seconds per epoch, respectively.[1] That matters because an algorithm that works only when attached to expensive hardware is less a practical tool and more an elaborate cry for funding.

Why anyone outside the lab should care

If this line of work holds up, it points to a better style of noninvasive BCI. Not brute-force deep learning. Not purely hand-crafted signal processing. Something in between: models that learn, but do so with a bit of prior knowledge and a little less arrogance.

That matters because EEG BCIs still face the usual gremlins - noisy data, person-to-person variability, and the awkward fact that your brain does not produce the same clean signal every session like a well-trained office printer.[2][3] Better decoding and lower computational cost could help move these systems into rehabilitation, assistive technology, and more portable real-world settings.

There is also a scientific upside. Interpretable models can show which channels and frequencies matter, rather than merely announcing a result and expecting applause. That makes them more useful for understanding the brain, not just extracting numbers from it.

Recent work shows why this is worth the effort. EEG-based BCIs are improving in continuous control tasks,[4] and researchers have even reported real-time robotic finger control from EEG alone.[5] The field is inching forward, which is how serious progress usually arrives - no brass band, just fewer mistakes.

One eyebrow raised

The catch is that only the abstract of this paper is readily accessible, so the reported gains cannot yet be weighed against the full methods and validation details.[1] EEG research has a long tradition of looking splendid until somebody tries it on a new dataset and the magic leaves by the side door.

Still, the direction is promising. A neural network that starts with some built-in respect for neurophysiology may be more useful than one that treats the brain as just another pile of numbers. Which, for both neuroscience and machine learning, is probably overdue.

References

1. Fu B, Li F, Li J, et al. Improved spontaneous EEG signal decoding efficiency by function predefined convolutional neural network. IEEE Transactions on Neural Networks and Learning Systems. 2026. DOI: https://doi.org/10.1109/TNNLS.2026.3652882. PubMed: https://pubmed.ncbi.nlm.nih.gov/41706793/

2. Mushtaq F, Welke D, Gallagher A, et al. One hundred years of EEG for brain and behaviour research. Nature Human Behaviour. 2024;8(8):1437-1443. DOI: https://doi.org/10.1038/s41562-024-01941-5. PubMed: https://pubmed.ncbi.nlm.nih.gov/39174725/

3. Jin W, Zhu X, Qian L, et al. Electroencephalogram-based adaptive closed-loop brain-computer interface in neurorehabilitation: a review. Frontiers in Computational Neuroscience. 2024;18:1431815. DOI: https://doi.org/10.3389/fncom.2024.1431815. PMC: https://pmc.ncbi.nlm.nih.gov/articles/PMC11449715/

4. Forenzo D, Zhu H, Shanahan J, Lim J, He B. Continuous tracking using deep learning-based decoding for noninvasive brain-computer interface. PNAS Nexus. 2024;3(4):pgae145. DOI: https://doi.org/10.1093/pnasnexus/pgae145. PMC: https://pmc.ncbi.nlm.nih.gov/articles/PMC11060102/

5. Ding Y, Udompanyawit C, Zhang Y, He B. EEG-based brain-computer interface enables real-time robotic hand control at individual finger level. Nature Communications. 2025;16:5401. DOI: https://doi.org/10.1038/s41467-025-61064-x. Nature: https://www.nature.com/articles/s41467-025-61064-x

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.